Online | 6 Months

Data Science Bootcamp

Your future career starts here. Study online, with flexible payment options and the full support of industry pros and career mentors.

Flexible payment options

Online, part-time

Introduction to Data Science

Build a career in an exciting, in-demand profession.

Data Scientists use math and coding to solve complex business problems and predict future outcomes.

Average salary increase

$17k

Common Job Titles

Data Scientist

Data & Analytics Manager

Machine Learning Engineer

Data Architect

Database Engineer

Job Skills

Statistics

Calculus

Computer Programming

Data Visualization

Machine Learning

Average salary increase for grads is based on our Outcomes report, which you can find here.

Results reflect a Thinkful online survey conducted among Thinkful graduates who reported an in-field job between September 2018 and February 2020. Respondent base (n=232) among 336 graduate invites. (Sample size represents this population of customers within a margin of error of 6.4% at 95% confidence.) Survey responses are not a guarantee of any particular results as individual experiences may vary. The survey was fielded between February 16th and February 28th, 2021. Graduates invited to the survey were offered a $25 gift card.

Big names hire Thinkful grads.

Flexible options to fund your tech career.

Our payment plans are built around your needs. Whether you can finance your studies upfront, or want to pay us back when you've secured a job, we've got you covered.

Curriculum

Job-ready skills employers are looking for.

01

Analytics and Experimentation

You'll learn the basics of using Python to find real insights from data you have, SQL for querying, as well as how to design and run your own experiments to gather new data.

02

Machine Learning: Supervised Learning

Time to build your first models. You'll start in machine learning with supervised models, covering everything from the classics to cutting-edge techniques.

03

Machine Learning: Unsupervised Learning

Here we'll cover the second-largest branch of machine learning: various forms of unsupervised models, useful for things like feature reduction and clustering.

04

Specializations

Data science is a wide field. Specializations will help you stand out. In this section, you'll learn more advanced skills to help you work with certain data types.

Select a course format and enter your email to view the entire syllabus.

Schedule Options

Online learning. Personal support.

Fit study into the rest of your life as you learn part-time.

Part-Time

Study independently, with lots of flexibility.

Tuition Payment Options

Our plans fit your needs.

Program discounts are available to veterans and eligible individuals. Ask your Admissions Rep to find out if you qualify.

For more detail, take a comprehensive look at all of our payment options here.

Take the first step to a new career. Book a call with Admissions.

Schedule a call

See how we compare to other bootcamps.

1-on-1 Mentorship

Get matched with a personal mentor who works in the industry.

Learn from their invaluable real-world career experiences.

Live personal video consultations

Detailed feedback and reviews

Career insights and tips

On-demand Technical Coaching

Connect with our expert educators in real time through our live chat service. Ask questions and get feedback, fast.

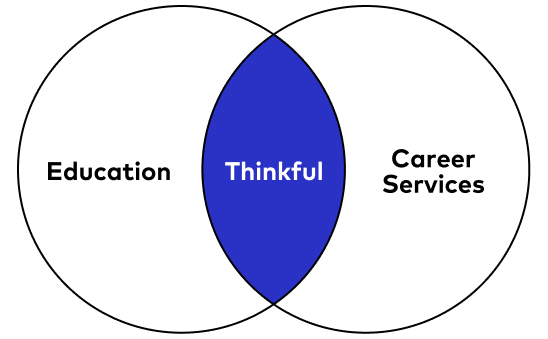

Career Services

Personalized career coaching.

By your side every step of the way.

Full-time career support from day 1

Unlimited technical interview practice

Industry and salary insights

LinkedIn and resume writing support

Exclusive access to open roles

Enrollment

Your new career begins here.

Enter your email address to apply for the Data Science course.

Student Reviews

Alexa Scott, Thinkful Grad

Current Job: Rio SEO

The connections I made were definitely one of my favorite things about Thinkful. Whether it be with students, TAs, or mentors, I am grateful for all of the new relationships.

FAQs

Please reach out to our admissions team to find the latest start dates for the course you're interested in.

We offer Flex programs (part-time) for all of our courses. Please visit Thinkful.com to find the latest start dates on the page for the course you're interested in.

Instructors are chosen based on their academic credentials, relevant industry experience, and teaching ability. Thinkful collects weekly feedback from students and staff on program curriculum, projects, and overall student experience in order to evaluate the quality of each program. In addition to student experience, Thinkful also considers industry demand for particular skill sets and success rates with each program in order to look for areas of improvement, ensuring that each program has successful outcomes that match Thinkful’s mission on a quarterly basis.

The minimum requirements to serve as a mentor, technical expert, or faculty for all Thinkful programs include:

3+ years of relevant industry experience

Demonstration of genuine student advocacy and empathy for beginners

Exceptional written and verbal communication skills

You are fully supported from the moment you join Thinkful. Our comprehensive, personalized approach to your success means that while you’re learning online, you’re never alone. Regardless of the program you choose, you’ll have access to:

1:1 Mentorship -- you’ll be assigned a mentor whose focus is on getting you hired and helping you build a profile that best shows off your skills and strengths

Technical coaches/Live Chat -- in addition to technical coaching and office hours sessions, our newly launched virtual messaging platform means you can get answers to study-related questions in real time.

Access to a Slack community -- you’ll have plenty of chances to pair with and learn from your fellow students in a safe, fun, online environment.

As of now, mentors are assigned by Thinkful based on fit and availability and cannot be chosen by students. The minimum requirements to serve as a mentor are 3+ years of relevant industry experience, demonstration of genuine student advocacy and empathy for beginners, and exceptional written and verbal communication skills.

Thinkful is dedicated to educating and connecting students to career opportunities via curated workshops and post-graduation support. The Careers team at Thinkful empowers students through a host of programming and resources aimed at career advancement as well as transparent outcomes. We provide career support in the form of:

Individual and group sessions

Mock behavioral and technical interviews

Curated technological content

Thematic workshops and career-focused Q&As, topics for which include but are not limited to networking, technical landscape, resume and LinkedIn reviews, cover letter writing, negotiating, navigating the job search, and interview preparation

Thinkful programs require a computer with a microphone, speakers, webcam, and high-speed internet access. We do not provide you with a computer, and every student must own or have access to a personal computer with at least 16 GB of RAM, a processor of at least 2.0 GHz (GPUs are not a requirement), and at least 256 GB of hard drive storage. Additionally, the computer must be able to download applications and software; computers like Chromebooks will not work.

For full-time programs, you're required to additionally provide the following equipment at your own cost:

A reliable Mac or PC with video capability including a webcam and microphone. Headphones are highly recommended. Macs must have the most current OS version installed, and PCs must be using Windows 10 or newer operating systems.

Reliable internet connection fast enough to stream video sessions clearly for upwards of 8 hours a day.

Additionally, all Data Analytics courses require Excel in order to start the course. You can find more information in the course catalog.